Ostrich economics

June 5, 2014

from Lars Syll

Deductivist modeling endeavours and an overly simplistic use of statistical and econometric tools are sure signs of the explanatory hubris that still haunts neoclassical mainstream economics.

In a recent interview Robert Lucas says

the evidence on postwar recessions … overwhelmingly supports the dominant importance of real shocks.

So, according to Lucas, changes in tastes and technologies should be able to explain the main fluctuations in e.g. the unemployment that we have seen during the last six or seven decades. But really — not even a Nobel laureate could in his wildest imagination come up with any warranted and justified explanation solely based on changes in tastes and technologies.

The Chicago übereconomist is simply wrong. But how do we protect ourselves from this kind of scientific nonsense? In The Scientific Illusion in Empirical Macroeconomics Larry Summers has a suggestion well worth considering, not least since it makes it easier to understand how mainstream neoclassical economics has actively contributed to causing today’s economic crisis rather than to solving it:

Modern scientific macroeconomics sees a (the?) crucial role of theory as the development of pseudo worlds or in Lucas’s (1980b) phrase the “provision of fully articulated, artificial economic systems that can serve as laboratories in which policies that would be prohibitively expensive to experiment with in actual economies can be tested out at much lower cost” and explicitly rejects the view that “theory is a collection of assertions about the actual economy” …

A great deal of the theoretical macroeconomics done by those professing to strive for rigor and generality, neither starts from empirical observation nor concludes with empirically verifiable prediction …

The typical approach is to write down a set of assumptions that seem in some sense reasonable, but are not subject to empirical test … and then derive their implications and report them as a conclusion. Since it is usually admitted that many considerations are omitted, the conclusion is rarely treated as a prediction …

However, an infinity of models can be created to justify any particular set of empirical predictions … What then do these exercises teach us about the world? … If empirical testing is ruled out, and persuasion is not attempted, in the end I am not sure these theoretical exercises teach us anything at all about the world we live in …

Reliance on deductive reasoning rather than theory based on empirical evidence is particularly pernicious when economists insist that the only meaningful questions are the ones their most recent models are designed to address. Serious economists who respond to questions about how today’s policies will affect tomorrow’s economy by taking refuge in technobabble about how the question is meaningless in a dynamic games context abdicate the field to those who are less timid. No small part of our current economic difficulties can be traced to ignorant zealots who gained influence by providing answers to questions that others labeled as meaningless or difficult. Sound theory based on evidence is surely our best protection against such quackery.

US poverty rate, actual and simulated, 1959 – 2012 (graph)

from David Ruccio

One of the points Thomas Piketty makes in his new book is that mainstream economists enshrined as “laws” of capitalist development certain “facts” that only had relevance during the immediate postwar decades.

These so-called laws included constant capital and labor shares and declining inequality. We now know they were no more than artifacts of a particular period of capitalist development for some countries (including the United States). Things began to change radically in the mid- to late-1970s for those same countries (again, including the United States).

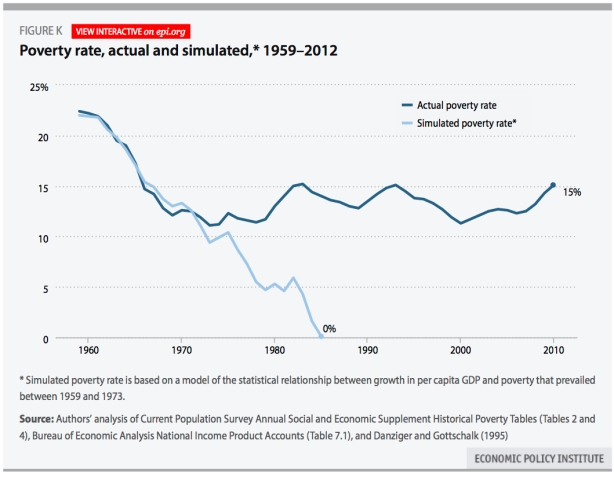

The same is true, as it turns out, of the relationship between economic growth and poverty. As the Economic Policy Institute (pdf) explains,

Economic growth used to be associated with significant poverty reductions, but since the 1970s the benefits of aggregate growth for lowering poverty have largely stalled. The figure compares the actual poverty rate with a simulated poverty rate based on a model of the statistical relationship between growth in per capita gross domestic product (GDP) and poverty that prevailed between 1959 and 1973. The model forecasts poverty quite accurately through the mid-1970s. Since then, the actual poverty rate stopped falling and has instead fluctuated cyclically within 4 percentage points above its trough in 1973.

However, the simulated poverty rate shows that if the relationship between per capita GDP growth and poverty that prevailed from 1959 to 1973 (wherein poverty dropped as the country, on average, got richer) had held, the poverty rate would have fallen to zero in the mid-1980s. Therefore, broadly shared prosperity could have led to a near eradication of poverty in the United States, but it did not.

So, the next time someone exclaims that the solution to poverty is more economic growth, explain to them that trickle-down economics, even if it was valid grosso modo for the immediate postwar period, has not worked for those at the bottom of the distribution of income for many decades.

And it certainly doesn’t

Earning less than your older siblings and nephews and nieces is getting increasingly likely, though less so for women and blacks. This is not mainly a sectoral composition effect.

Workers’ wages are the most important component of household money income. Representing about 83 percent of total household income, they are the driving force behind changes in income growth and inequality. Consequently, changes in wages play an important role in determining trends in income inequality, household spending, and the overall welfare of households. In the last 20 years, while nominal wages have shown a consistent and upward trend (Figure 1), real wages have progressed much more slowly … after a long period (beginning in 1973) of stagnant real wages, in the late 1990s low unemployment rates, increases in the minimum wage, and improvements in labor productivity contributed to a boost in wages, which translated into 12.4 percent cumulative growth in real wages from the late ‘90s until 2002. Partially resulting from a weak economic recovery after the 2001 recession, real wages then stagnated despite continued growth in labor productivity … real wages have shown practically no improvement (1 percent growth) since 2002. The period between 2002 and 2013 has become known as the decade of flat wages … . However, over the same period of time … there were significant changes in the composition of the labor market. In particular, the labor force is aging and becoming more educated. In addition … workers are delaying retirement. Increases in age, experience, and education (which are all positively correlated with wages) could in fact be propping up observed real wages…. This is exactly what we uncover… what appears to have been a decade of flat real wages was actually a decade of declining real wages within age/education worker profiles.

and:

changes in the composition of industries and occupations contributed to the observed trends: the labor market has been slowly shifting toward higher-wage occupations and industries. Holding only occupation and industry at their 1994 levels would have resulted in slower real wage growth, but industry and occupation changes were not the driving force behind the counterfactual real wage declines

The US jobs-gap (3 graphs)

June 8, 2014

from David Ruccio

With 217,000 new jobs created in May, the U.S. economy is finally (finally, after 50 months!) back to the pre-recession employment level.

Except it isn’t. Not by a long shot. Not when we consider the “jobs gap”—which we can calculate in one of two ways: by the amount of time it will take at this rate to get back to pre-recession employment levels while also absorbing the people who enter the labor force each month (4 years) or by the difference between payroll employment and the number of jobs needed to keep up with the growth in the potential labor force (6.9 million jobs).
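The two jobs-gap figures above can be related with simple arithmetic. The sketch below assumes that both numbers come from one consistent calculation with constant monthly figures, which the post does not state. The function names are hypothetical, and the monthly labor-force absorption it backs out is an implied value, not a number reported in the source.

```python
def months_to_close_gap(jobs_gap, monthly_job_gain, monthly_jobs_needed):
    """Months until the jobs gap closes, assuming constant monthly figures."""
    net_gain = monthly_job_gain - monthly_jobs_needed
    if net_gain <= 0:
        raise ValueError("gap never closes: job gains do not outpace labor-force growth")
    return jobs_gap / net_gain


def implied_monthly_jobs_needed(jobs_gap, monthly_job_gain, months):
    """Monthly jobs absorbed by labor-force growth that would be consistent
    with closing the gap in the given number of months (a derived assumption)."""
    return monthly_job_gain - jobs_gap / months


# Figures quoted in the post.
JOBS_GAP = 6_900_000      # jobs needed to catch up with the potential labor force
MAY_JOB_GAIN = 217_000    # new jobs created in May 2014
YEARS_TO_CLOSE = 4        # time to close the gap "at this rate"

needed = implied_monthly_jobs_needed(JOBS_GAP, MAY_JOB_GAIN, YEARS_TO_CLOSE * 12)
print(f"Implied monthly jobs needed for labor-force growth: {needed:,.0f}")
print(f"Months to close the gap: {months_to_close_gap(JOBS_GAP, MAY_JOB_GAIN, needed):.0f}")
```

Under these assumptions, only the part of monthly job creation that exceeds labor-force growth reduces the gap, which is why reaching the pre-recession employment level is not the same as closing it.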

And that’s not even considering the kinds of jobs that have been created or the pay for those jobs or the percentage of the unemployed who have been without a job for 27 weeks or more.

Or, for that matter, the fact that all those who have been lucky enough to keep their jobs or to get a new job are forced to have the freedom to work for a small number of employers who are able to capture and do what they will with the profits their workers create.

CEO-to-worker compensation ratio, USA 1965 – 2013

from David Ruccio

In charting the amount of the surplus that ends up in the hands (or, if you prefer, pockets or bank accounts) of CEOs, the Economic Policy Institute finds that:

- Average CEO compensation was $15.2 million in 2013, using a comprehensive measure of CEO pay that covers CEOs of the top 350 U.S. firms and includes the value of stock options exercised in a given year, up 2.8 percent since 2012 and 21.7 percent since 2010.

- From 1978 to 2013, CEO compensation, inflation-adjusted, increased 937 percent, a rise more than double stock market growth and substantially greater than the painfully slow 10.2 percent growth in a typical worker’s compensation over the same period.

- The CEO-to-worker compensation ratio was 20-to-1 in 1965 and 29.9-to-1 in 1978, grew to 122.6-to-1 in 1995, peaked at 383.4-to-1 in 2000, and was 295.9-to-1 in 2013, far higher than it was in the 1960s, 1970s, 1980s, or 1990s.

- If Facebook, which they exclude from their data due to its outlier high compensation numbers, had been included in the sample, average CEO pay would have been $24.8 million in 2013, and the CEO-to-worker compensation ratio would have been 510.7-to-1.
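To put the cumulative growth figures in the list above on a comparable yearly footing, they can be converted to annualized rates. This is a minimal sketch using only the percentages quoted from the Economic Policy Institute. The function name `annualized_rate` is an illustrative assumption, and the calculation assumes smooth compounding over 1978 to 2013, which actual pay did not follow.

```python
def annualized_rate(cumulative_pct, years):
    """Constant yearly growth rate that compounds to the given cumulative percent change."""
    growth_factor = 1 + cumulative_pct / 100
    return growth_factor ** (1 / years) - 1


YEARS = 2013 - 1978  # 35 years

ceo_rate = annualized_rate(937, YEARS)        # CEO compensation, inflation-adjusted
worker_rate = annualized_rate(10.2, YEARS)    # typical worker's compensation

print(f"CEO compensation:    {ceo_rate:.2%} per year")
print(f"Worker compensation: {worker_rate:.2%} per year")

# Ratio of the quoted CEO-to-worker ratios, 2013 versus 1978.
print(f"CEO-to-worker ratio multiplied by {295.9 / 29.9:.1f} between 1978 and 2013")
```

The annualized view makes the same point as the cumulative one: a modest-looking yearly difference, compounded over 35 years, produces the gap reported in the list.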

Piketty, plutonomists, and the legal framework

from Edward Fullbrook

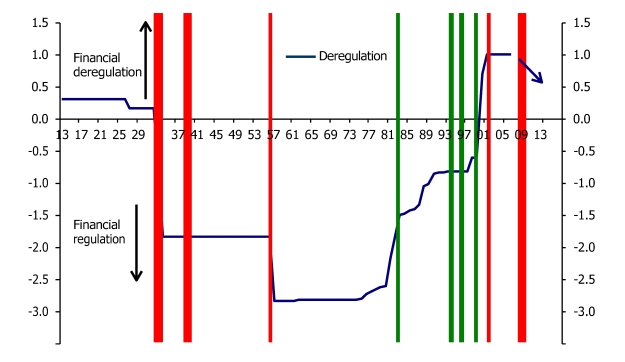

Plutonomists, like real-world economists, know that the main determinant of income and wealth distribution is the legal framework in which an economy functions. The Plutonomy Movement, by far the most powerful political force of our age, is founded on the underground application of this basic principle. Occasionally this becomes manifest when one of plutonomy’s strategic documents is leaked. Such an event happened last week when the Bank of America Merrill Lynch report “Piketty and Plutonomy: The revenge of inequality” found its way into non-plutonomist hands. In addition to posts on this blog, there was coverage in the Chinese and the Australian press and Brad DeLong posted an excerpt from the report including three graphs. Here is another excerpt, very brief, and number 42 of the report’s 45 figures. Red indicates “regulatory legislation” and green “deregulatory legislation”.

Financial re-regulation will likely put a damper on the incomes of some finance professionals, reducing income inequality at the level of the top 0.1% of households (>US$1.5mn income) but not for the top 0.01% households (>US$7.2mn income). However, the vast growth in global financial wealth projected by Piketty, both in EMs and DMs, will likely boost income inequality at the top 0.01% level, driven by astute capital/wealth managers like hedge funds and private equity exploiting scale economies.

Drawing on our earlier work, and the research of Thomas Philippon and Ariell Reshef we highlight the importance of financial de-regulation in engendering plutonomy. Figure 42 delineates the history of financial regulation in the USA. (emphasis added)

A history of financial regulation and deregulation

The report’s lead author, Ajay Kapur, is one of Plutonomy’s key strategists. Recently Brad DeLong posted on his website three of Kapur’s Citigroup reports from about ten years ago. Here are the links:

And here is “The Plutonomism Manifesto”

Piketty phenomena – “Riding a Wave of Growth: Global Wealth 2014” (chart)

from Edward Fullbrook

The Boston Consulting Group (BCG) is a leading player in what is called “the global wealth-management industry” and which in effect is plutonomy’s tactical cavalry in financial markets. BCG have just “released” a report disclosing that in the year 2013 the wealth of the world’s people worth $100 million or more increased 19.7 percent. That compares, they say, to 3.7 percent for the wealth of sub-millionaires. Naturally they are overjoyed at this latest redistribution.

“Wealth” meaning what? Like most people and as also with the symbol “capital”, they use “wealth” to stand for two different things, and also like most people, economists especially, they often lose track of which referent they are trying to talk about. But we can overlook that here because the 19.7 percent and 3.7 percent refer to financial wealth and, with exceptions, that is all plutonomists and their agents really care about.

The report documents how the upward redistribution of wealth to the 0.1 percent and especially to the 0.01 percent is accelerating, in other words, how the plutonomist programme (pre-Piketty it was never reported by mass media) is now restructuring the world at an even faster rate. Here is a taster of how they see the next five years.

Wealth held by all segments above $1 million is projected to grow by at least 7.7 percent per year through 2018, compared with an average of 3.7 percent per year in segments below $1 million. Ultra-high-net-worth (UHNW) households, those with $100 million or more, held $8.4 trillion in wealth in 2013 (5.5 percent of the global total), an increase of 19.7 percent over 2012. At an expected CAGR [compound annual growth rate] of 9.1 percent over the next five years, UHNW households are projected to hold $13.0 trillion in wealth (6.5 percent of the total) by the end of 2018.

Wealth managers must develop winning client-acquisition strategies and differentiated, segment-specific value propositions in order to succeed with HNW and UHNW clients and meet their ever-increasing needs.
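The compound-growth projection in the BCG excerpt above can be checked in a few lines. This is only a sketch built from the quoted figures. The function name `project` is an illustrative assumption, and the implied global totals are backed out from the rounded shares in the quote, so they are approximate and are not numbers stated in the excerpt.

```python
def project(value, annual_rate, years):
    """Value after compounding at a constant annual rate for the given number of years."""
    return value * (1 + annual_rate) ** years


UHNW_2013 = 8.4   # trillion dollars held by UHNW households in 2013
CAGR = 0.091      # expected compound annual growth rate
YEARS = 5         # 2013 through the end of 2018

uhnw_2018 = project(UHNW_2013, CAGR, YEARS)
print(f"Projected UHNW wealth in 2018: ${uhnw_2018:.1f} trillion")

# Implied global totals, derived from the quoted (rounded) shares of 5.5 and 6.5 percent.
global_2013 = UHNW_2013 / 0.055
global_2018 = 13.0 / 0.065
implied_global_cagr = (global_2018 / global_2013) ** (1 / YEARS) - 1
print(f"Implied global wealth: ${global_2013:.0f} trillion (2013), ${global_2018:.0f} trillion (2018)")
print(f"Implied growth of the global total: {implied_global_cagr:.1%} per year")
```

The projected UHNW figure matches the $13.0 trillion in the quote. The implied growth of the global total is lower than 9.1 percent, which is what a rising UHNW share means.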

The industry’s top client nations are of course those with the most ultra-high-net-worth households, and they list the top five as follows, the numbers to the right being the number of UHNW households in 2013:

- United States 4,754

- United Kingdom 1,044

- China 983

- Germany 881

- Russia 536

The report’s Exhibit 2 is especially interesting as it compares the growth rate of GDP and the growth rate of financial wealth. The numbers in ( ) refer to 2012, and the others to 2013.

from David Ruccio

A couple of weeks back I wrote that, when mainstream economists debate the causes of inflation, they focus only on labor costs and forget all about profits.

It’s as if the only cost of production is the price of labor, and the price of capital is entirely irrelevant. Therefore, if and when wages rise, they expect the overall level of prices to go up. In other words, the presumption is that capital will get its “normal” rate of return, which can only be safeguarded from wage increases by raising the price of output.

Well, today on the Wall Street Journal web site, Josh Bivens pushes back and argues that

what’s really striking about price growth since the end of the Great Recession is how much of it has been driven by rising profits, not rising labor costs. In fact, labor costs have been essentially flat between the end of the Great Recession and the first quarter of 2014. Profits earned per unit sold, on the other hand, have been rising at an average annual growth rate of nearly 9% since the recovery’s beginning. To the degree that there is any inflationary pressure in the U.S. economy over that time, it is surely not coming from labor costs. . .

prices in the non-financial corporate sector rose an average of 1% per year since the end of the Great Recession. But fully 100% of this increase can be explained by rising unit profits. Unit labor costs can account for 8% of price growth over this period while the other influences account for negative 8% of the rise.

We need a new way to teach economics

June 5, 2014

from John Komlos

Remember the walkout of students from their Principles of Economics class at Harvard a couple of years ago in solidarity with the “Occupy” movement?

They thought that the economics they were being taught was doctrinaire, failed to provide a balanced perspective on the real existing economy, and did not show sufficient empathy for the 45 million people living in poverty. No wonder: the economics being taught on blackboards in almost all classrooms makes it appear as though markets descended straight from heaven, while maintaining a conspiracy of silence on the Achilles heels of free markets, such as not paying sufficient attention to safety, not caring enough about the environment, and being indifferent to the welfare of future generations.

Now John Cassidy reports in the New Yorker that a group of students in 16 countries are pushing back on the arrogance of mainstream economists and are demanding that a more realistic economics be taught with fewer abstractions, less emphasis on mathematical methods of problem solving, and more attention devoted to the plight of the underclass.

In most classrooms free markets become God’s gift to humanity, government is the boogeyman, and taxation is a simple burden on society’s well-being. What nonsense! Taxes are used to finance schools, basic research, and infrastructure, and markets go haywire without adequate government backstop, as the recent “mother of all financial crises” so amply demonstrated. But professional economists are immune to such real-world evidence. After all, the models work perfectly well on the blackboard.

But the models are so simplistic that they present a caricature of the real existing economy. Charles Ferguson in his Oscar-winning documentary “Inside Job” demonstrated most vividly the culpability of academic economists. The Federal Reserve in DC has no less than 300 PhD economists working for it, yet they were incapable of seeing the crisis brewing for years. Presumably those who dared to disagree with Greenspan’s ideology that bubbles were nothing to worry about and that markets worked perfectly well without government regulation became outcasts.

That was exactly the way Brooksley Born, who was the single top-ranking government official with sufficient common sense to attempt to regulate derivatives, was bullied until she resigned. And the warnings of economists like Hyman Minsky, who had been warning of the inherent instability of the financial sector, were anathema to people like Ben Bernanke. Ben mentioned him on occasion in uncivil tones which sufficed to banish Minsky from the classrooms and textbooks of this country. Such censorship American style belies the ideal of the university of being open to dissenting viewpoints.

So don’t be surprised that students around the world demand a more colorful palette of perspectives. After all, markets are man-made institutions. So the human element with its emotions and complex psychology should be an integral part of the discipline. These students do not want economics to become a branch of mathematics.

Greenspan’s congressional confession, that he made a mistake and that his ideology was wrong, made absolutely no impression on academic economists. They continue to disregard not only Minsky but other major economists who dissent from the mainstream’s depiction of homo oeconomicus, such as John K. Galbraith, Th. Veblen, and H. Simon. And, believe it or not, when I took macroeconomics in graduate school from Nobel-prize winning economist Robert Lucas, even the name of J.M. Keynes, arguably the greatest economist of the 20th century, was banned from discussion. So if nonconformists are banned from the classroom, how are students going to get a balanced overview of the subject?

This is particularly important for students of Principles of Economics because there are more than a million of them every year and most of them do not take another economics course at all. So all they are exposed to is the mainstream’s view that super rationality reigns in a market inhabited by consumers who have sufficient brain power to know every detail of the economy and who are therefore not satisfied with anything less than an optimum outcome. They possess perfect understanding of all the nuances in small print and perfect foresight from the beginning to the end of their lives and are not inhibited by the challenges of information overload insofar as information is free, available instantaneously, and a cinch to understand. So the market works perfectly well on the blackboard. What the students are demanding is that it work as well in real life and not only in Fairfax County, VA but also in Harlem, NY.

Piketty’s assault on silly neoclassical economic models

from Lars Syll

The French economist Thomas Piketty arrived in Washington, D.C., on Sunday for a week of talks at some of the nation’s leading policy-research centers but which might as well have been billed as a victory lap up the East Coast. The English translation of Piketty’s new book, Capital in the Twenty-first Century, a formidably rigorous, 700-page history of wealth, out barely five weeks, had just made The New York Times’s best-seller list. But even before it appeared, on the strength of a handful of advance reviews and a surge of Internet buzz, Piketty’s transformation was complete: from respected researcher on income distribution to ranking heavyweight, a scholar who, armed with reams of data and charts (and, unusual for an economist, a gilded tongue), proposed to upend decades of mainstream wisdom on inequality through an unprecedented analysis of the past.

Apparently bedazzled by the book’s arguments, few reviewers mentioned its assault on the field. Yet Piketty’s disdain is unmistakable, the lament of a scholar long estranged from the mainstream of his profession. “For far too long,” he writes, “economists have sought to define themselves in terms of their supposedly scientific methods. In fact, those methods rely on an immoderate use of mathematical models, which are frequently no more than an excuse for occupying the terrain and masking the vacuity of the content. Too much energy has been and still is being wasted on pure theoretical speculation without a clear specification of the economic facts one is trying to explain or the social and political problems one is trying to resolve” …

In one sense, critiques of the discipline are nothing new. Economists, a voluble lot, seem to occupy a disproportionate amount of the blogosphere, and spend a good deal of their time there engaged in heated methodological debate. In a high-profile spat in March, Paul Krugman and Lars P. Syll, an economist at Malmö University, in Sweden, posted rival views of IS-LM (for investment saving-liquidity money), a model that has been a mainstay of macroeconomic theory for decades. Syll dismissed IS-LM as a “brilliantly silly gadget.” Krugman defended it as “a simplification of reality designed to provide useful insight into particular questions. And since 2008 it has done that job, yes, brilliantly.” (In a follow-up post, Krugman was more circumspect: “You should use models, but you should always remember that they’re models, and always beware of conclusions that depend too much on the simplifying assumptions.”)

Still, it’s one thing to trade barbs online, and quite another to present your magnum opus as an act of methodological sedition. Capital in the Twenty-first Century, Piketty makes clear, is his notion of what economics scholarship should look like: combining analyses of macro (growth) and micro (income distribution) issues; grounded in abundant empirical data; larded with references to sociology, history, and literature; and sparing on the math. In its scale and scope, the book evokes the foundational works of classical economics by Ricardo, Malthus, and Marx—to whose treatise on capitalism Piketty’s title alludes. The sizable recent literature on various aspects of inequality earns barely a mention. “There is a fair amount of empirical work out there,” says James K. Galbraith, of the University of Texas at Austin who studies wage inequality and who published one of the few skeptical reviews of the book to date, in Dissent. “He has a tendency to make deferential reference to mainstream thinkers while ignoring the critiques that already exist” …

Whether Capital in the Twenty-first Century survives its spectacular debut to become an inspiration for future scholarship—let alone future policy—will depend in part on how Piketty’s data and interpretation hold up over time … Even detractors agree that the World Top Incomes Database, which Piketty and his collaborators have assembled at the Paris School of Economics, where he now teaches, is invaluable. Covering 30 countries to date, it is by far the largest international database on inequality.

Less likely to endure is Piketty’s remedy for inequality: a progressive global wealth tax on fortunes over 1-million euros.

In Washington, a policy town, remedies were what many of Piketty’s commentators wanted to talk about, and they tended to dismiss his proposal, while taking the opportunity to promote their own ideas instead. Even Piketty concedes that enforcing a global wealth tax would require unprecedented levels of international cooperation and, at least in the United States, where higher taxes are widely believed to lead to lower growth, overcoming entrenched political opposition.

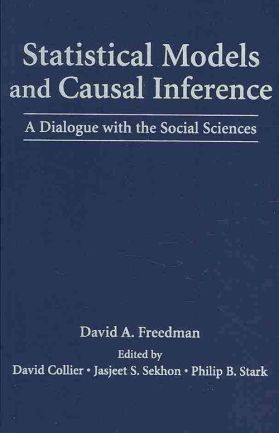

If you only have time to read one statistics book — this is the one!

June 25, 2014

from Lars Syll

Mathematical statistician David A. Freedman’s Statistical Models and Causal Inference (Cambridge University Press, 2010) is a marvellous book. It ought to be mandatory reading for every serious social scientist – including economists and econometricians – who doesn’t want to succumb to ad hoc assumptions and unsupported statistical conclusions!

How do we calibrate the uncertainty introduced by data collection? Nowadays, this question has become quite salient, and it is routinely answered using well-known methods of statistical inference, with standard errors, t-tests, and P-values … These conventional answers, however, turn out to depend critically on certain rather restrictive assumptions, for instance, random sampling …

Thus, investigators who use conventional statistical technique turn out to be making, explicitly or implicitly, quite restrictive behavioral assumptions about their data collection process … More typically, perhaps, the data in hand are simply the data most readily available …

The moment that conventional statistical inferences are made from convenience samples, substantive assumptions are made about how the social world operates … When applied to convenience samples, the random sampling assumption is not a mere technicality or a minor revision on the periphery; the assumption becomes an integral part of the theory …

In particular, regression and its elaborations … are now standard tools of the trade. Although rarely discussed, statistical assumptions have major impacts on analytic results obtained by such methods.

Consider the usual textbook exposition of least squares regression. We have n observational units, indexed by $i = 1, \ldots, n$. There is a response variable $y_i$, conceptualized as $\mu_i + \epsilon_i$, where $\mu_i$ is the theoretical mean of $y_i$ while the disturbances or errors $\epsilon_i$ represent the impact of random variation (sometimes of omitted variables). The errors are assumed to be drawn independently from a common (gaussian) distribution with mean 0 and finite variance. Generally, the error distribution is not empirically identifiable outside the model; so it cannot be studied directly, even in principle, without the model. The error distribution is an imaginary population and the errors $\epsilon_i$ are treated as if they were a random sample from this imaginary population, a research strategy whose frailty was discussed earlier.

Usually, explanatory variables are introduced and $\mu_i$ is hypothesized to be a linear combination of such variables. The assumptions about the $\mu_i$ and $\epsilon_i$ are seldom justified or even made explicit, although minor correlations in the $\epsilon_i$ can create major bias in estimated standard errors for coefficients …

Why do $\mu_i$ and $\epsilon_i$ behave as assumed? To answer this question, investigators would have to consider, much more closely than is commonly done, the connection between social processes and statistical assumptions …

We have tried to demonstrate that statistical inference with convenience samples is a risky business. While there are better and worse ways to proceed with the data at hand, real progress depends on deeper understanding of the data-generation mechanism. In practice, statistical issues and substantive issues overlap. No amount of statistical maneuvering will get very far without some understanding of how the data were produced.

More generally, we are highly suspicious of efforts to develop empirical generalizations from any single dataset. Rather than ask what would happen in principle if the study were repeated, it makes sense to actually repeat the study. Indeed, it is probably impossible to predict the changes attendant on replication without doing replications. Similarly, it may be impossible to predict changes resulting from interventions without actually intervening.
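The excerpt’s claim that minor correlations in the errors can create major bias in estimated standard errors can be illustrated with a small simulation. This is a sketch under stated assumptions and not an example from Freedman’s book. The data are synthetic, the errors share a weak within-group component (correlation 0.05), the regressor is constant within each group, and every parameter value is hypothetical. NumPy is assumed to be installed.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical design: 50 groups of 100 units, weak within-group error correlation.
n_groups, group_size, rho = 50, 100, 0.05
n = n_groups * group_size
n_sims = 500

# Regressor that is constant within each group (e.g. a group-level policy variable).
x = np.repeat(rng.normal(size=n_groups), group_size)
X = np.column_stack([np.ones(n), x])
XtX_inv = np.linalg.inv(X.T @ X)

slopes, naive_ses = [], []
for _ in range(n_sims):
    # Errors = shared group component + idiosyncratic noise, total variance 1.
    shared = np.repeat(rng.normal(scale=np.sqrt(rho), size=n_groups), group_size)
    eps = shared + rng.normal(scale=np.sqrt(1 - rho), size=n)
    y = eps  # the true slope is zero, so mu_i = 0 for every unit

    beta = XtX_inv @ X.T @ y
    resid = y - X @ beta
    sigma2 = resid @ resid / (n - 2)

    slopes.append(beta[1])
    # Textbook standard error, which assumes independent errors.
    naive_ses.append(np.sqrt(sigma2 * XtX_inv[1, 1]))

actual_sd = np.std(slopes)            # how much the slope estimate really varies
mean_naive_se = np.mean(naive_ses)    # what the textbook formula reports
ratio = actual_sd / mean_naive_se

print(f"Actual spread of slope estimates: {actual_sd:.4f}")
print(f"Average reported standard error:  {mean_naive_se:.4f}")
print(f"Understatement factor:            {ratio:.2f}")
```

For this particular design, the variance of the slope is inflated by a factor of 1 + (group_size − 1) × rho = 5.95, so the reported standard error should understate the true spread by a factor of roughly 2.4, even though the error correlation is only 0.05. As the excerpt says, the size of the problem depends on how the data were generated, which the regression output alone cannot reveal.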
