from Edward Fullbrook

For me three economists stand out historically as having been the most effective at building resistance to the dominance of scientism in economics. Keynes of course is one, and the other two are Bernard Guerrien and Tony Lawson, Guerrien because he was the intellectual and moral force behind Autisme Economie which, among other things, gave rise to the RWER; and Lawson because his papers, books and seminars have inspired, joined and intellectually fortified thousands.

It is notable that all three of these economists were or were on their way to becoming professional mathematicians before switching to economics. When still in his twenties, Keynes’ mathematical genius was already publicly celebrated, most notably by Whitehead and Russell, and he had already published what was to become for his first discipline a classic work. Guerrien’s first PhD was in mathematics, and Lawson was doing a PhD in mathematics at Cambridge when its economics department lured him over in an attempt to boost its mathematical competence.

The significance for me of Keynes, Guerrien and Lawson being mathematicians first and economists second is that it meant that they were not even for an hour taken in or intimidated by the aggressive scientism of neoclassical economists, and this has enabled them to write analytically about the dominant scientism with a quiet straightforwardness that is beyond the reach of most of us.

An example of this kind of writing that I am talking about is the short essay below that in 2002 Guerrien published in what is now the Real-World Economics Review.

Is There Anything Worth Keeping in Standard Microeconomics?

Bernard Guerrien (Université Paris I, France)

The French students’ movement against autism in economics started with a revolt against the disproportionate importance of microeconomics in economic teaching. The students complained that nobody had really proved to them that microeconomics was of any use; what is the interest of going through “micro1”, “micro2”, “micro3”, etc., using lots of mathematics to speak of fictitious households, fictitious enterprises and fictitious markets?

Actually, when one thinks about it, it turns out that microeconomics is simply “neoclassical theory”. Realizing this, I agree with the French students when they say that:

- In a course on economic theories, neoclassical theory should be taught alongside other economic theories (classical political economy, marxist theory, keynesian theory, etc.) showing that it is just one among several other approaches;

- The principal elements and assumptions of neoclassical theory (consumer and producer choice, general equilibrium existence theorems, and so on) should be taught with very little mathematics (or with none at all). The main reason is that it is essential for students to understand the economic meaning of assumptions made in mathematical language. As they study economics, and not mathematics, students must decide if these assumptions are relevant, or meaningful. But, for that, assumptions must be expressed in clear English and not in abstruse formulas. Only if assumptions, and models, are relevant, can it be of any interest to try to see what “results” or “theorems” can be deduced from them.

I am convinced that assumptions of standard microeconomics are not at all relevant. And I think that it is nonsense to say – as some people do (using the “as if” argument) – that relevant results can be deduced from assumptions that obviously contradict almost everything that we observe around us.

The main reason why the teaching of microeconomics (or of “micro foundations” of macroeconomics) has been called “autistic” is that it is increasingly impossible to discuss real-world economic questions with microeconomists – and with almost all neoclassical theorists. They are trapped in their system, and don’t in fact care about the outside world any more. If you consult any microeconomic textbook, it is full of maths (e.g. Kreps or Mas-Colell, Whinston and Green) or of “tales” (e.g. Varian or Schotter), without real data (occasionally you find “examples”, or “applications”, with numerical examples – but they are purely fictitious, invented by the authors).

At first, French students got quite a lot of support from teachers and professors: hundreds of teachers signed petitions backing their movement – especially pleading for “pluralism” in teaching the different ways of approaching economics. But when the students proposed a precise program of studies, without “micro 1”, “micro 2”, “micro 3” …, without macroeconomics “with microfoundations” or with a “representative agent”, almost all teachers refused, considering that it was “too much” because “students must learn all these things, even with some mathematical details”. When you ask them “why?”, the answer usually goes something like this: “Well, even if we, personally, never use the kind of ‘theory’ or ‘tools’ taught in microeconomics courses (since we are regulationist, evolutionist, institutionalist, conventionalist, etc.), surely there are people who do ‘use’ and ‘apply’ them, even if it is in an ‘unrealistic’, or ‘excessive’ way”.

But when you ask those scholars who do “use these tools”, especially those who do a lot of econometrics with “representative agent” models, they answer (if you insist quite a bit): “OK, I agree with you that it is nonsense to represent the whole economy by the (intertemporal) choice of one agent – consumer and producer – or by a unique household that owns a unique firm; but if you don’t do that, you don’t do anything!”.

There are also some microeconomists who try to prove, by experiments or by some kind of econometrics, that people act rationally. But to do that you don’t need to know envelope theorems, compensated (Hicksian) demand or the Slutsky matrix! Indeed, “experimental economics” has a very tenuous relation with “theory”: it tests very elementary ideas (about rational choice or about markets) in very simple situations – even if, in general, people don’t act as theory predicts, but that is another question.

Microeconomics: “unrealistic” or “irrelevant”?

Most of the time microeconomics is criticized because of its “lack of realism”. But “lack of realism” doesn’t necessarily mean irrelevance; the expression is usually understood as meaning that the theory in question is “more or less distant from reality”, or as giving a more or less acceptable proxy of reality (people differing about the quality of the approximation). The idea is implicitly this: “if we work hard, relaxing some assumptions and using more powerful mathematical theorems, microeconomics will progressively become more and more realistic. There are then – at least – some interesting concepts and results in microeconomics, that a healthy, post-autistic, economic theory should incorporate”.

That’s what Geoff Harcourt implicitly says in the post-autistic economics review, no. 11, when he writes:

Against this macroeconomic background, modern microeconomics has a bias towards examining the behaviour of competitive markets (as set out most fully and rigorously in the Arrow-Debreu model of general equilibrium), not as reference points but as approximations to what is actually going on. Of course, departures from them are taught, increasingly by the clever application of game theory. Moreover, the deficiencies of real markets of all sorts are examined in the light of the implications, for example, of the findings of the asymmetric information theorists (three of whom – George Akerlof, Michael Spence, and Joe Stiglitz – have just (10/10/01) been awarded this year’s Nobel Prize. From Amartya Sen on, the Nobel Prize electors seem to be back on track).

What is Harcourt saying? He is telling us that the Arrow-Debreu model has something to do with “the behaviour of competitive markets”; he is saying that game theory can be cleverly “applied”; he says that there are “findings” made by Akerlof, Spence and Stiglitz. If all this is true, then students have to learn general equilibrium theory (as giving “approximations to what is actually going on”), game theory, asymmetric information theory, and so on. That means that they need micro 1, micro 2, micro 3… courses (consumer and producer choice, perfect and imperfect competition, game theory, “market failures”, etc.).

I don’t agree at all with Geoff Harcourt because:

- The Arrow-Debreu model has nothing to do with competition and markets: it is a model of a “highly centralised” economy, with a benevolent auctioneer doing a lot of things, and with stupid price-taker agents;

- Game theory cannot be “applied”: it only tells little “stories” about the possible consequences of rational individuals’ choices made once and for all and simultaneously by all of them;

- Akerlof, Spence and Stiglitz have no new “findings”, they just present, in a mathematical form, some very old ideas – long known by insurance companies and by those who organize auctions and second hand markets;

- Amartya Sen, as an economist, is a standard microeconomist (that is what he was awarded the Nobel Prize for): only the vocabulary is different (“capabilities”, “functionings”, etc.).

But, perhaps, all “post autistic” economists won’t agree with me.

It would be good then that they give their opinion and, more generally, that we try to answer, in detail, the question: Is there anything worth keeping in microeconomics – and in neoclassical theory? If there is, what?

______________________________

Bernard Guerrien is the author of La Théorie des jeux (2002), Dictionnaire d’analyse économique (2002) and La théorie économique néoclassique. macroéconomie, théorie des jeux, tome 2 (1999).

“On the use and misuse of theories and models in economics”

Lars Pålsson Syll

Published 14 April 2015 by WEA Books

A wonderful set of clearly written and highly informative essays by a scholar who is knowledgeable, critical and sharp enough to see how things really are in the discipline, and honest and brave enough to say how things are. A must read especially for those truly concerned and/or puzzled about the state of modern economics.

Tony Lawson

Table of Contents

- Introduction

- What is (wrong with) economic theory?

- Capturing causality in economics and the limits of statistical inference

- Microfoundations – spectacularly useless and positively harmful

- Economics textbooks – anomalies and transmogrification of truth

- Rational expectations – a fallacious foundation for macroeconomics

- Neoliberalism and neoclassical economics

- The limits of marginal productivity theory

- References

About the author

Lars Pålsson Syll received a PhD in economic history in 1991 and a PhD in economics in 1997, both at Lund University, Sweden. Since 2004 he has been professor of social science at Malmö University, Sweden. His primary research areas have been in the philosophy and methodology of economics, theories of distributive justice, and critical realist social science. As philosopher of science and methodologist he is a critical realist and an outspoken opponent of all kinds of social constructivism and postmodern relativism. As social scientist and economist he is strongly influenced by John Maynard Keynes and Hyman Minsky. He is the author of Social Choice, Value and Exploitation: an Economic-Philosophical Critique (in Swedish, 1991), Utility Theory and Structural Analysis (1997), Economic Theory and Method: A Critical Realist Perspective (in Swedish, 2001), The Dismal Science (in Swedish, 2001), The History of Economic Theories (in Swedish, 4th ed. 2007), John Maynard Keynes (in Swedish, 2007), An Outline of the History of Economics (in Swedish, 2011), as well as numerous articles in scientific journals.

On dogmatism in economics

from Lars Syll

Abstraction is the most valuable ladder of any science. In the social sciences, as Marx forcefully argued, it is all the more indispensable since there ‘the force of abstraction’ must compensate for the impossibility of using microscopes or chemical reactions. However, the task of science is not to climb up the easiest ladder and remain there forever distilling and redistilling the same pure stuff. Standard economics, by opposing any suggestions that the economic process may consist of something more than a jigsaw puzzle with all its elements given, has identified itself with dogmatism. And this is a privilegium odiosum that has dwarfed the understanding of the economic process wherever it has been exercised.

‘DSGE’ macro models criticism: a limited round up. Part 2: market fundamentalism.

Look here for part 1 of this series, about money. Today: market fundamentalism. The posts are supposed to be succinct, to look at the issues from the angle of the economic statistician.

There are, in my view, three main strands of market fundamentalism in DSGE-macro models:

1) The idea that market prices are ‘optimal’, especially when we’re in equilibrium (whatever that is)

2) The idea that non market production, especially government production like lots of education or health care, is essentially worthless

3) The idea that markets are not just very dynamic and creative (which surprisingly does not seem to be very interesting to the DSGE economists) but that a market system leads to some kind of optimal general equilibrium or (taking the models at face value) is at least not totally inconsistent with such a situation.

Ad 1) We use purchased capital goods and durable consumer goods plus ‘intermediate inputs’ plus unpaid labour plus market exchange plus public goods to achieve our aims. Think of using your car to buy groceries. In this case, only the groceries and the gasoline used have ‘market prices’ and are included in the model. Aside: I define market prices as monetary prices which are set before a transaction takes place. ‘Shadow prices’, like the assumed monetary value of the time it takes to buy groceries, are not market prices, as no exchange between two parties takes place and as the price used to calculate the monetary value of this time might be set by an economist studying this behaviour – but it is not set ex ante by the person buying groceries. Which makes it a far cry from a real life monetary market price. The same holds for depreciation of (in this case) the car, which (though purchased as a market article) is used in a non-market, non-monetary surrounding. The costs can be calculated – but many people do not really do this, while, more important in this case, these costs are not (part of) the market price of buying groceries, as this price simply does not exist as a market price (yes, I know, the internet is changing this but the internet is also leading to the demarketing of many services). Long story short: the assumption that society is one big market and only transactions based upon market prices add to ‘social utility’ is silly and wrong (even when we could define social utility, which we can’t). Monetary market prices which, by definition, are set ex ante do guide our behaviour – but only part of it.
Wonkish: ironically, it was Gary Becker (“the man who put a shadow price upon everything”) who showed that even in the case of markets and market prices you don’t have to assume rationality to derive downward sloping demand curves, which means that even if our society only consisted of markets and we were in equilibrium-la-la-land there is no deductive reason to assume that these prices are in any way optimal.

How do statisticians treat this? The National Accounts only look at the flow of monetary spending and disregard the use of consumer durables and non-paid labour. A large exception to this: housing services of owner occupied houses are seen as production, using a shadow price: the capital aspect of household labour is to a large extent covered by these accounts. Statistics of hours and time use however do exist and are used by economic statisticians, look for one example at this Levy Institute publication about labour time, unpaid labour and household production in Turkey. There clearly is no statistical reason to restrict the definition of social utility (whatever that definition is, does anybody have a source?) to market purchases.

Ad 2) Introductory remark: a much more eloquent elaboration of the same point along a somewhat different line is made by the very sharp June Sekera. According to DSGE models, a nurse paid by a private hospital does add to social utility (whatever that is) but the money earned by a nurse paid by the UK National Health Service is ‘wasteful expenditure’. You don’t believe this? It’s indeed not a necessary part of DSGE models but despite this almost all DSGE models assume this. Look here and here. Assuming this makes comparison of different countries almost impossible for economists using DSGE models, as health care is largely nationalized in a country like the UK (and therewith by assumption considered to be wasteful expenditure) while this is not the case in the USA – you really are comparing inconsistent sets of data when you restrict consumption to household consumption expenditure. Fortunately, economists have developed the concept of AIC, Actual Individual Consumption, which corrects for such differences between countries (and shows that differences in the level of consumption when corrected for these differences as well as for differences in price levels are much smaller than indicated by consumer spending). The point: there is no need at all to restrict our concept of non wasteful expenditure to market purchases. But neoclassical economists still do this – government expenditure is wasteful by definition. Even the dikes, without which my country simply would not exist, are a total waste… (mind that after every major flood (1570, 1717, 1825, 1953) not just the dikes themselves were improved but the way they were financed too – more taxation and monetization and less private and local ownership and responsibility). To be clear: I totally want to discuss if private education is more effective and/or efficient than public education. But that’s not the point – according to the market fundamentalist models, public education adds nothing.

Ad 3) Do market systems tend to equilibrium? No, says Roger Farmer, who looks at the development of USA GDP (1955-2014) and discovers that there are persistent differences between the very long-term growth rate and long term growth rates. This might, however, be explained by supply side changes of the economy. No, say Hendry and Mizon, who look at UK unemployment in the long run (1860-2011): there are inexplicable and large differences in the short and medium term level of unemployment. No, says Knibbe, who looks at the rate of investment in present day rich countries in the long run (1820-2010). There are very large differences in short as well as long-term rates of investment – and there does not seem to be any way in which a market economy on its own adapts to large declines. Neither exports nor private consumption nor government consumption seems to fill the demand gap left by a decline in the investment rate in a market-response way (the pattern of investment seems to be related in some way to the Hendry/Mizon unemployment data). Now, somebody might succeed in explaining these empirical patterns using a DSGE model. As far as I know, this has not happened yet. The whole DSGE endeavour lacks empirical discipline and is, contrary to the scientific idea, not based upon independent estimates of variables like social utility (whatever that may be, indeed).

from today’s Financial Times: Moribund orthodoxy grips economics departments

Bringing economics back into liberal academic life. April 16, 2015. http://www.ft.com/cms/s/0/99799262-e293-11e4-ba33-00144feab7de.html#ixzz3XTazf200

Sir, The moribund orthodoxy that currently exercises such an inflexible grip on university economics departments will, as Wolfgang Münchau comments, inevitably face a challenge, and this “will come from outside the discipline and will be brutal” (“Macroeconomists need new tools to challenge consensus”, April 13). The orthodoxy has brought this dismal prospect on itself through the brutality with which it has purged those departments of any other school of thought than its own.

Indeed, in its extreme version, the orthodoxy’s doctrine holds quite simply that there are “no schools of thought in economics”, a totalitarian assertion all too true in most economics departments today, so ruthless has been the purge of alternatives. As a result, the different approaches to economic issues of Adam Smith, Bentham, Ricardo, Marshall, Keynes, Friedman and so on are all relegated to the fringe subject of the “history of economic thought”.

This is indeed a 1984 situation, in which the very idea that debate could exist on how to approach economic issues is regarded as a mere historical memory, and consequently of purely antiquarian interest. However, economics students are increasingly demanding a pluralistic curriculum, as discussed by Martin Wolf in “Aim for enlightenment, technicalities can wait” (April 11). Similarly, the “fossilised habits of thought” entrenched in much of the economics professions are facing increasing criticism from within the academic world (see, for example, “The world no longer listens to the deaf prophets of the west”, Mark Mazower, April 14). Let us hope that all this pressure from students, from the worlds of journalism and of interdisciplinary debate, will combine to bring university economics departments back into the world of liberal academic life from which they have for so long isolated themselves.

Hugh Goodacre

University College London and University of Westminster, UK

Mastering ‘metrics

from Lars Syll

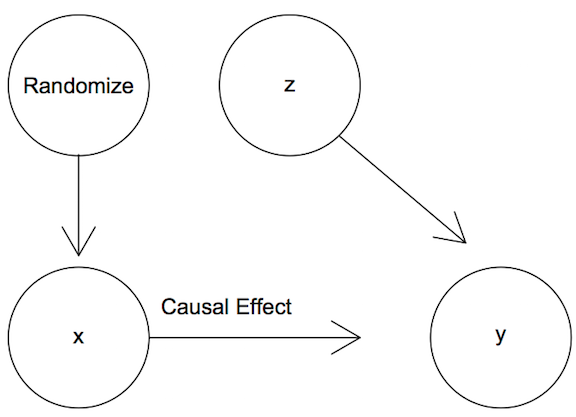

In their new book, Mastering ‘Metrics: The Path from Cause to Effect, Joshua D. Angrist and Jörn-Steffen Pischke write:

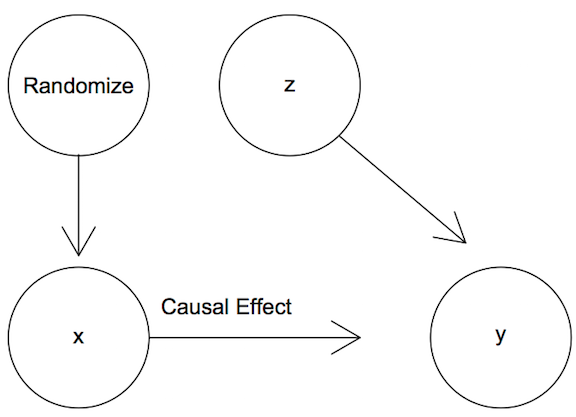

Our first line of attack on the causality problem is a randomized experiment, often called a randomized trial. In a randomized trial, researchers change the causal variables of interest … for a group selected using something like a coin toss. By changing circumstances randomly, we make it highly likely that the variable of interest is unrelated to the many other factors determining the outcomes we want to study. Random assignment isn’t the same as holding everything else fixed, but it has the same effect. Random manipulation makes other things equal hold on average across the groups that did and did not experience manipulation. As we explain … ‘on average’ is usually good enough.

Angrist and Pischke may “dream of the trials we’d like to do” and consider “the notion of an ideal experiment” something that “disciplines our approach to econometric research,” but to maintain that ‘on average’ is “usually good enough” is an allegation that in my view is rather unwarranted, and for many reasons.

First of all it amounts to nothing but hand waving to simpliciter assume, without argumentation, that it is tenable to treat social agents and relations as homogeneous and interchangeable entities.

Randomization is used to basically allow the econometrician to treat the population as consisting of interchangeable and homogeneous groups (‘treatment’ and ‘control’). The regression models one arrives at by using randomized trials tell us the average effect that variations in variable X have on the outcome variable Y, without having to explicitly control for effects of other explanatory variables R, S, T, etc., etc. Everything is assumed to be essentially equal except the values taken by variable X.

In a usual regression context one would apply an ordinary least squares estimator (OLS) in trying to get an unbiased and consistent estimate:

Y = α + βX + ε,

where α is a constant intercept, β a constant “structural” causal effect and ε an error term.

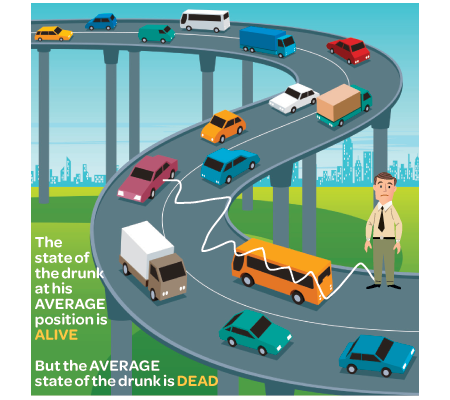

The problem here is that although we may get an estimate of the “true” average causal effect, this may “mask” important heterogeneous effects of a causal nature. Although we get the right answer of the average causal effect being 0, those who are “treated” (X=1) may have causal effects equal to -100 and those “not treated” (X=0) may have causal effects equal to 100. Contemplating being treated or not, most people would probably be interested in knowing about this underlying heterogeneity and would not consider the OLS average effect particularly enlightening.
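
To make the masking point concrete, here is a minimal simulation sketch. It is an illustration under stated assumptions and not taken from Angrist and Pischke or from the post: the population, the intercept of 10 and the noise level are all hypothetical. Half of the units are assumed to respond to treatment with +100 and half with -100, and treatment is assigned by a coin toss. With a binary X, the OLS slope in Y = α + βX + ε is simply the difference between the two group means.

```python
import random
import statistics


def simulate_trial(n=10_000, seed=0):
    """Return (x, y, effect) rows for a hypothetical randomized trial."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        effect = rng.choice([-100.0, 100.0])  # unit-level causal effect (assumed)
        x = rng.randint(0, 1)                 # coin-toss treatment assignment
        y = 10.0 + effect * x + rng.gauss(0.0, 1.0)
        rows.append((x, y, effect))
    return rows


def ols_slope(rows):
    """OLS estimate of beta in Y = alpha + beta*X + eps.

    With a binary X the OLS slope equals the difference between the
    treated and untreated group means.
    """
    treated = [y for x, y, _ in rows if x == 1]
    control = [y for x, y, _ in rows if x == 0]
    return statistics.fmean(treated) - statistics.fmean(control)


rows = simulate_trial()
beta_hat = ols_slope(rows)
effects = sorted({effect for _, _, effect in rows})
print(f"estimated average effect: {beta_hat:.2f}")
print(f"distinct individual effects: {effects}")
```

The estimated slope comes out close to zero even though no single unit in this made-up population has an effect anywhere near zero, which is the sense in which the average can be "right" and still uninformative for the individual.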

Limiting model assumptions in economic science always have to be closely examined since if we are going to be able to show that the mechanisms or causes that we isolate and handle in our models are stable in the sense that they do not change when we “export” them to our “target systems”, we have to be able to show that they do not only hold under ceteris paribus conditions and a fortiori only are of limited value to our understanding, explanations or predictions of real economic systems.

Real world social systems are not governed by stable causal mechanisms or capacities. The kinds of “laws” and relations that econometrics has established, are laws and relations about entities in models that presuppose causal mechanisms being atomistic and additive. When causal mechanisms operate in real world social target systems they only do it in ever-changing and unstable combinations where the whole is more than a mechanical sum of parts. If economic regularities obtain they do it (as a rule) only because we engineered them for that purpose. Outside man-made “nomological machines” they are rare, or even non-existent. Unfortunately that also makes most of the achievements of econometrics – as most of contemporary endeavours of mainstream economic theoretical modeling – rather useless.

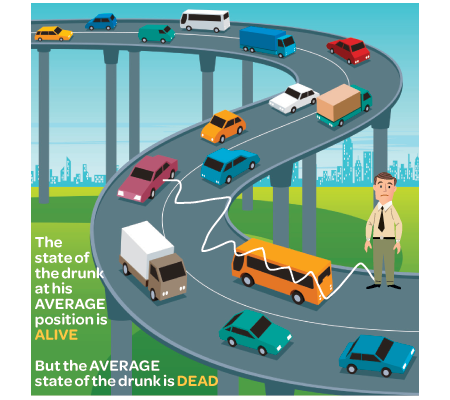

Remember that a model is not the truth. It is a lie to help you get your point across. And in the case of modeling economic risk, your model is a lie about others, who are probably lying themselves. And what’s worse than a simple lie? A complicated lie.

Sam L. Savage, The Flaw of Averages

When Joshua Angrist and Jörn-Steffen Pischke in an earlier article of theirs [“The Credibility Revolution in Empirical Economics: How Better Research Design Is Taking the Con out of Econometrics,” Journal of Economic Perspectives, 2010] say that

anyone who makes a living out of data analysis probably believes that heterogeneity is limited enough that the well-understood past can be informative about the future

I really think they underestimate the heterogeneity problem. It does not just turn up as an external validity problem when trying to “export” regression results to different times or different target populations. It is also often an internal problem to the millions of regression estimates that economists produce every year.

But when the randomization is purposeful, a whole new set of issues arises — experimental contamination — which is much more serious with human subjects in a social system than with chemicals mixed in beakers … Anyone who designs an experiment in economics would do well to anticipate the inevitable barrage of questions regarding the valid transference of things learned in the lab (one value of z) into the real world (a different value of z) …

Absent observation of the interactive compounding effects z, what is estimated is some kind of average treatment effect which is called by Imbens and Angrist (1994) a “Local Average Treatment Effect,” which is a little like the lawyer who explained that when he was a young man he lost many cases he should have won but as he grew older he won many that he should have lost, so that on the average justice was done. In other words, if you act as if the treatment effect is a random variable by substituting β_t for β_0 + β′z_t, the notation inappropriately relieves you of the heavy burden of considering what are the interactive confounders and finding some way to measure them …

If little thought has gone into identifying these possible confounders, it seems probable that little thought will be given to the limited applicability of the results in other settings.

Ed Leamer
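
Leamer's point about the lab value of z versus the real-world value of z can be sketched numerically. The following is only an illustration: the treatment effect is assumed to take the linear form β_0 + β′z from the quotation, and the parameter values (β_0 = 2, β′ = -3), the noise level and the function name `estimated_effect` are hypothetical choices made for the example.

```python
import random
import statistics


def estimated_effect(z, beta0=2.0, beta1=-3.0, n=5_000, seed=0):
    """Difference-in-means estimate from a randomized trial run at context z.

    The true effect in context z is assumed to be beta0 + beta1 * z.
    """
    rng = random.Random(seed)
    treated, control = [], []
    for _ in range(n):
        x = rng.randint(0, 1)  # random assignment
        y = (beta0 + beta1 * z) * x + rng.gauss(0.0, 1.0)
        (treated if x == 1 else control).append(y)
    return statistics.fmean(treated) - statistics.fmean(control)


lab = estimated_effect(z=0.0)    # the experiment is run where z = 0
field = estimated_effect(z=1.0)  # the policy is applied where z = 1
print(f"effect estimated in the lab:   {lab:.2f}")
print(f"effect in the target setting:  {field:.2f}")
```

Under these assumptions the experiment is internally valid in both settings, yet the lab estimate (about 2) has the opposite sign to the effect in the target setting (about -1). Nothing in the lab data alone reveals this unless z is observed and varied.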

Evidence-based theories and policies are highly valued nowadays. Randomization is supposed to control for bias from unknown confounders. The received opinion is that evidence based on randomized experiments therefore is the best.

More and more economists have also lately come to advocate randomization as the principal method for ensuring being able to make valid causal inferences.

I would however rather argue that randomization, just as econometrics, promises more than it can deliver, basically because it requires assumptions that in practice are not possible to maintain.

Especially when it comes to questions of causality, randomization is nowadays considered some kind of “gold standard”. Everything has to be evidence-based, and the evidence has to come from randomized experiments.

But just as econometrics, randomization is basically a deductive method. Given the assumptions (such as manipulability, transitivity, separability, additivity, linearity, etc.) these methods deliver deductive inferences. The problem, of course, is that we will never completely know when the assumptions are right. And although randomization may contribute to controlling for confounding, it does not guarantee it, since genuine randomness presupposes infinite experimentation and we know all real experimentation is finite. And even if randomization may help to establish average causal effects, it says nothing of individual effects unless homogeneity is added to the list of assumptions. Real target systems are seldom epistemically isomorphic to our axiomatic-deductive models/systems, and even if they were, we still have to argue for the external validity of the conclusions reached from within these epistemically convenient models/systems. Causal evidence generated by randomization procedures may be valid in “closed” models, but what we usually are interested in, is causal evidence in the real target system we happen to live in.
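
The remark that finite randomization does not guarantee balance can be illustrated with a small sketch. It assumes a single standard-normal background covariate and an even split into treatment and control; both are hypothetical choices for the example, as is the function name `mean_abs_imbalance`.

```python
import random
import statistics


def mean_abs_imbalance(n, reps=200, seed=1):
    """Average absolute gap in a covariate's mean between the two arms.

    For each of `reps` hypothetical experiments, n units with a random
    background covariate are shuffled and split in half.
    """
    rng = random.Random(seed)
    gaps = []
    for _ in range(reps):
        covariate = [rng.gauss(0.0, 1.0) for _ in range(n)]
        rng.shuffle(covariate)  # random assignment to the two arms
        half = n // 2
        treated, control = covariate[:half], covariate[half:]
        gaps.append(abs(statistics.fmean(treated) - statistics.fmean(control)))
    return statistics.fmean(gaps)


small = mean_abs_imbalance(n=20)
large = mean_abs_imbalance(n=2_000)
print(f"typical covariate gap with n=20:   {small:.3f}")
print(f"typical covariate gap with n=2000: {large:.3f}")
```

The gap shrinks as the sample grows but is never exactly zero in any single finite experiment, so in a given trial an unobserved factor can still differ between the groups by chance.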

When does a conclusion established in population X hold for target population Y? Only under very restrictive conditions!

Angrist’s and Pischke’s “ideally controlled experiments” tell us with certainty what causes what effects — but only given the right “closures”. Making appropriate extrapolations from (ideal, accidental, natural or quasi) experiments to different settings, populations or target systems, is not easy. “It works there” is no evidence for “it will work here”. Causes deduced in an experimental setting still have to show that they come with an export-warrant to the target population/system. The causal background assumptions made have to be justified, and without licenses to export, the value of “rigorous” and “precise” methods — and ‘on-average-knowledge’ — is despairingly small.

Model validation and significance testing

from Lars Syll

In its standard form, a significance test is not the kind of “severe test” we are looking for when we try to confirm or disconfirm empirical scientific hypotheses. This is problematic for many reasons, one being the strong tendency to accept the null hypothesis simply because it cannot be rejected at the standard 5% significance level. In their standard form, significance tests are biased against new hypotheses because they make it hard to disconfirm the null hypothesis.

And as shown over and over again when significance testing is applied, people have a tendency to read “not disconfirmed” as “probably confirmed.” Standard scientific methodology tells us that when there is only, say, a 10% probability that pure sampling error could account for the observed difference between the data and the null hypothesis, it would be more “reasonable” to conclude that we have a case of disconfirmation. Especially if we perform many independent tests of our hypothesis and they all give about the same 10% result as our reported one, I guess most researchers would count the hypothesis as even more disconfirmed.
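One standard way to make this intuition about repeated tests concrete is Fisher's method for combining independent p-values. The text above does not name this technique, so it is swapped in here purely as an illustration. The helper name `fisher_combined_p` and the choice of five tests are assumptions. Under the null hypothesis, and assuming the tests are independent, the statistic -2 Σ ln p follows a chi-square distribution with 2k degrees of freedom.

```python
import math

def fisher_combined_p(p_values):
    """Combine k independent p-values with Fisher's method.

    The statistic -2 * sum(ln p) is chi-square with 2k degrees of freedom
    under the null. For even degrees of freedom the upper-tail probability
    has a closed form, so no statistics library is needed.
    """
    k = len(p_values)
    half_stat = -sum(math.log(p) for p in p_values)  # statistic divided by 2
    tail_terms = sum(half_stat ** i / math.factorial(i) for i in range(k))
    return math.exp(-half_stat) * tail_terms

# Five hypothetical independent tests, each "not significant" at p = 0.10
combined = fisher_combined_p([0.10] * 5)
print(f"combined p-value: {combined:.4f}")  # about 0.011
```

Five results that each miss the 5% threshold jointly yield a combined p-value of roughly 1%, provided the independence assumption holds.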

Most importantly, we should never forget that the underlying parameters we use when performing significance tests are model constructions. Our p-values mean next to nothing if the model is wrong. As the eminent mathematical statistician David Freedman writes:

I believe model validation to be a central issue. Of course, many of my colleagues will be found to disagree. For them, fitting models to data, computing standard errors, and performing significance tests is “informative,” even though the basic statistical assumptions (linearity, independence of errors, etc.) cannot be validated. This position seems indefensible, nor are the consequences trivial. Perhaps it is time to reconsider.
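How little a p-value means when the model is wrong can be sketched with a simulation in which the independence-of-errors assumption fails. Everything here is hypothetical: the AR(1) error process, the value rho = 0.8, the sample size and the function name `false_rejection_rate` are assumptions made for the sake of the example. The null hypothesis (mean zero) is true in every simulated sample, so a correctly specified 5% test should reject in about 5% of them.

```python
import math
import random
import statistics

def false_rejection_rate(rho, n=100, sims=2000, seed=1):
    """Share of samples in which a naive z-test rejects a TRUE null (mean = 0).

    The test assumes independent observations. The data follow an AR(1)
    process with autocorrelation rho, so the assumption holds only if rho = 0.
    """
    rng = random.Random(seed)
    rejections = 0
    for _ in range(sims):
        x, series = 0.0, []
        for _ in range(n):
            x = rho * x + rng.gauss(0.0, 1.0)  # autocorrelated observations
            series.append(x)
        naive_se = statistics.stdev(series) / math.sqrt(n)  # assumes independence
        if abs(statistics.fmean(series) / naive_se) > 1.96:
            rejections += 1
    return rejections / sims

print("independent errors:   ", false_rejection_rate(rho=0.0))
print("autocorrelated errors:", false_rejection_rate(rho=0.8))
```

With independent errors the rejection rate stays near the nominal 5%. With strongly autocorrelated errors the same test rejects the true null far more often, so its reported p-values no longer mean what they claim to mean.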

Randomization is basically used to allow the econometrician to treat the population as consisting of interchangeable and homogeneous groups (‘treatment’ and ‘control’). The regression models one arrives at by using randomized trials tell us the average effect that variations in variable X have on the outcome variable Y, without having to explicitly control for the effects of other explanatory variables R, S, T, etc. Everything is assumed to be essentially equal except the values taken by variable X.
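The logic described here can be sketched in a toy data-generating process. The coefficients, the single background variable R and the function name `diff_in_means` are all assumptions made for illustration. The outcome Y depends on the treatment X with a true effect of 2.0 and on an uncontrolled variable R. A simple difference in means is computed once with coin-flip assignment and once when units with high R select into treatment.

```python
import random
import statistics

def diff_in_means(randomized, n=20_000, true_effect=2.0, seed=2):
    """Difference in mean outcome between treated and control units."""
    rng = random.Random(seed)
    treated_y, control_y = [], []
    for _ in range(n):
        r = rng.gauss(0.0, 1.0)  # background variable R, never controlled for
        if randomized:
            x = rng.random() < 0.5           # assignment unrelated to R
        else:
            x = r + rng.gauss(0.0, 1.0) > 0  # high-R units select into treatment
        y = true_effect * x + 3.0 * r + rng.gauss(0.0, 1.0)
        (treated_y if x else control_y).append(y)
    return statistics.fmean(treated_y) - statistics.fmean(control_y)

print("randomized assignment:", round(diff_in_means(randomized=True), 2))
print("self-selected:        ", round(diff_in_means(randomized=False), 2))
```

Under random assignment the difference in means lands near the assumed effect of 2.0 without R ever entering the calculation. Under self-selection it is badly inflated. Note that the sketch builds in additivity and a homogeneous effect by construction, which are exactly the assumptions that cannot be taken for granted in a real target system.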
